The computational principles underlying neural function are largely unknown. Work in our laboratory combines computational principles with neurophysiological and behavioural analyses to further our understanding of neural information processing. There is a growing recognition that the basic function of the brain is to estimate or predict important aspects of the world for the purpose of selecting motor outputs (decision-making). Our research seeks to develop a general theory of brain function and to understand how neurons can achieve the general goal of the brain in learning to make accurate predictions.

A General Computational Theory of the Nervous System

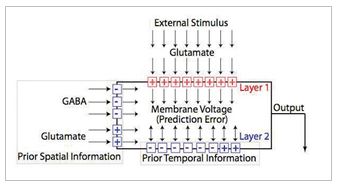

Although the computational principles underlying information processing in the brain are largely unknown, a general theory was recently published (Fiorillo, 2008). Research in our laboratory seeks to further develop and test this theory. According to the theory, the general function of the brain, and of each single neuron, is to learn to make accurate predictions about important aspects of the world. Each neuron learns in the same manner (through associative Hebbian and anti-Hebbian plasticity) to predict the state of a small part of the world. However, because each neuron develops in a unique environment, each neuron naturally acquires its own distinct information about a distinct part of the world, depending on the statistical patterns in the inputs to which it is exposed. If the theory is correct, it could allow us to progress towards the creation of artificial neural networks that possess the intelligence of biological nervous systems.

A schematic illustration of the proposed computational function of a single generic neuron (Fiorillo, 2008). The output of the neuron corresponds to prediction error, or the difference between current and prior information. The neuron selects its own inputs. Hebbian plasticity mechanisms select amongst those individual inputs contributing current information in order to maximize the prediction error. Anti-Hebbian plasticity mechanisms select amongst those individual inputs contributing prior information in order to minimize the prediction error.
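The idea that a neuron's output is the difference between current and prior information can be illustrated with a toy simulation. This is only a minimal sketch and not the model in Fiorillo (2008): the single scalar weight `w`, the 0.8 coupling between the two inputs, the noise level, and the delta-rule update standing in for anti-Hebbian plasticity are all assumptions made for illustration. The Hebbian selection of current inputs is left out.

```python
import random


def run_neuron(n_steps=2000, lr=0.05, seed=0):
    """Toy neuron whose output is current input minus a learned prediction.

    Hypothetical illustration only: 'prior' is a signal that partly
    predicts 'current', and w is the strength of the prior input.
    """
    rng = random.Random(seed)
    w = 0.0          # weight on the prior (predictive) input, assumed scalar
    errors = []      # absolute prediction error at each step
    for _ in range(n_steps):
        prior = rng.uniform(0.0, 1.0)
        # Assumed world statistics: current is 0.8 * prior plus small noise.
        current = 0.8 * prior + rng.gauss(0.0, 0.05)
        error = current - w * prior       # neuron output = prediction error
        # Delta-rule update (stand-in for anti-Hebbian plasticity):
        # adjusts w so that the prior input cancels more of the current input.
        w += lr * error * prior
        errors.append(abs(error))
    return w, errors


if __name__ == "__main__":
    w, errors = run_neuron()
    early = sum(errors[:200]) / 200
    late = sum(errors[-200:]) / 200
    print(f"learned weight: {w:.3f}")
    print(f"mean |error| early: {early:.3f}, late: {late:.3f}")
```

In this sketch the output shrinks as the weight on the prior input approaches the true coupling, so that only the unpredictable part of the current input remains in the output.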

Our current research has two main goals. First, we seek to develop a new approach to the concepts of information and probability within neuroscience in order to provide the mathematical and philosophical foundations for a general theory of brain function (Fiorillo, 2012; Fiorillo, 2010). This effort is focused particularly on how to apply the pioneering work of E.T. Jaynes on probability theory to the brain and to single neurons. Our second major goal is to experimentally test the published theory (Fiorillo, 2008). If the theory is correct, we should be able to predict and explain the properties of ion channels and synapses given knowledge of natural patterns of brain activity. We are experimentally testing this through electrophysiological recordings of single neurons in brain slices, and through computer simulations (Hong and Fiorillo, 2011; Kim and Fiorillo, 2011).

Dopamine Neurons

In addition to exploring the general computational theory described above, a second component of the work in our laboratory examines the physiology of midbrain dopamine neurons in behaving animals. According to the theory summarized above, as well as other accounts, the neural development of goal-directed behavior requires one or more reward signals that shape neural circuitry. A group of neurons in the midbrain that contain the neurotransmitter dopamine is thought to provide such a reward signal. Dopamine neurons are well known to be of primary importance in drug addiction, Parkinson’s disease and schizophrenia. Starting around 1980, dopamine became known to the public as the “pleasure chemical,” although we now know that this description is rather simplistic and misleading. Physiological studies have shown that dopamine neurons are activated when reward value is better than expected, and that their activity is suppressed when reward value is worse than expected. Thus dopamine neurons are said to encode a “reward prediction error.” Prior to this physiological discovery, such errors were already used to drive learning in models of reinforcement learning, in both machines and animals. Based on the apparent correspondence between theory and physiology, as well as a large body of pharmacological evidence, it is believed that dopamine may function to teach the brain to distinguish what is “good” from what is “bad” by modulating neural plasticity.
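The notion of a reward prediction error driving learning can be made concrete with a Rescorla-Wagner style update, one of the simplest forms used in reinforcement learning models. The sketch below is illustrative only: the function name, the learning rate of 0.2, and the reward sequence are assumptions, and it is not a model of dopamine neuron firing.

```python
def reward_prediction_errors(rewards, learning_rate=0.2):
    """Return the prediction error on each trial and the final expectation.

    Hypothetical illustration: 'value' is the expected reward, and the
    error is the received reward minus that expectation.
    """
    value = 0.0
    errors = []
    for reward in rewards:
        delta = reward - value           # positive if better than expected
        value += learning_rate * delta   # move expectation toward the outcome
        errors.append(delta)
    return errors, value


if __name__ == "__main__":
    # 30 rewarded trials followed by one trial on which reward is omitted.
    trials = [1.0] * 30 + [0.0]
    errors, value = reward_prediction_errors(trials)
    print(f"first rewarded trial error: {errors[0]:+.3f}")
    print(f"last rewarded trial error:  {errors[29]:+.3f}")
    print(f"omitted reward error:       {errors[30]:+.3f}")
```

In this toy example the error is large and positive when the reward is unexpected, falls towards zero as the reward becomes predicted, and turns negative when an expected reward is omitted, which parallels the activation and suppression described above.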

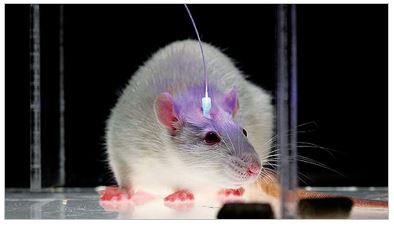

Whereas past work has used electrophysiological recordings of dopamine neurons in behaving animals to characterize their responses to natural reward stimuli (for example, Fiorillo et al., 2008; Fiorillo, 2011), current work tests theories of dopamine’s effects on behavior by using new optogenetic methods in mice (Kim et al., 2012). This allows us to use optical stimulation to mimic the natural “reward prediction error” signal of dopamine neurons. We have found that stimulation lasting only 0.2 seconds is sufficient to motivate mice to work for it, which supports a theory of dopamine’s role in reinforcement learning. This new technique makes it possible to test many hypotheses about dopamine function.

1. Fiorillo CD, Kim JK, Hong SZ (2014) The meaning of spikes from the neuron’s point of view: predictive homeostasis generates the appearance of randomness. Front. Comput. Neurosci. 8:49. doi:10.3389/fncom.2014.00049

2. Fiorillo CD (2013) Two dimensions of value: Dopamine neurons represent reward but not aversiveness. Science 341, 546-549.

3. Hong SZ, Kim HR, Fiorillo CD (2014) T-type calcium channels promote predictive homeostasis of input-output relations in thalamocortical neurons of lateral geniculate nucleus. Front. Comput. Neurosci. 8:98. doi:10.3389/fncom.2014.00098